Reader

Introduction

Computational methods offer a number of ways to study how readers read and interact with literature. The publishing industry is also investing heavily in this area to gain a better understanding of readers' preferences.

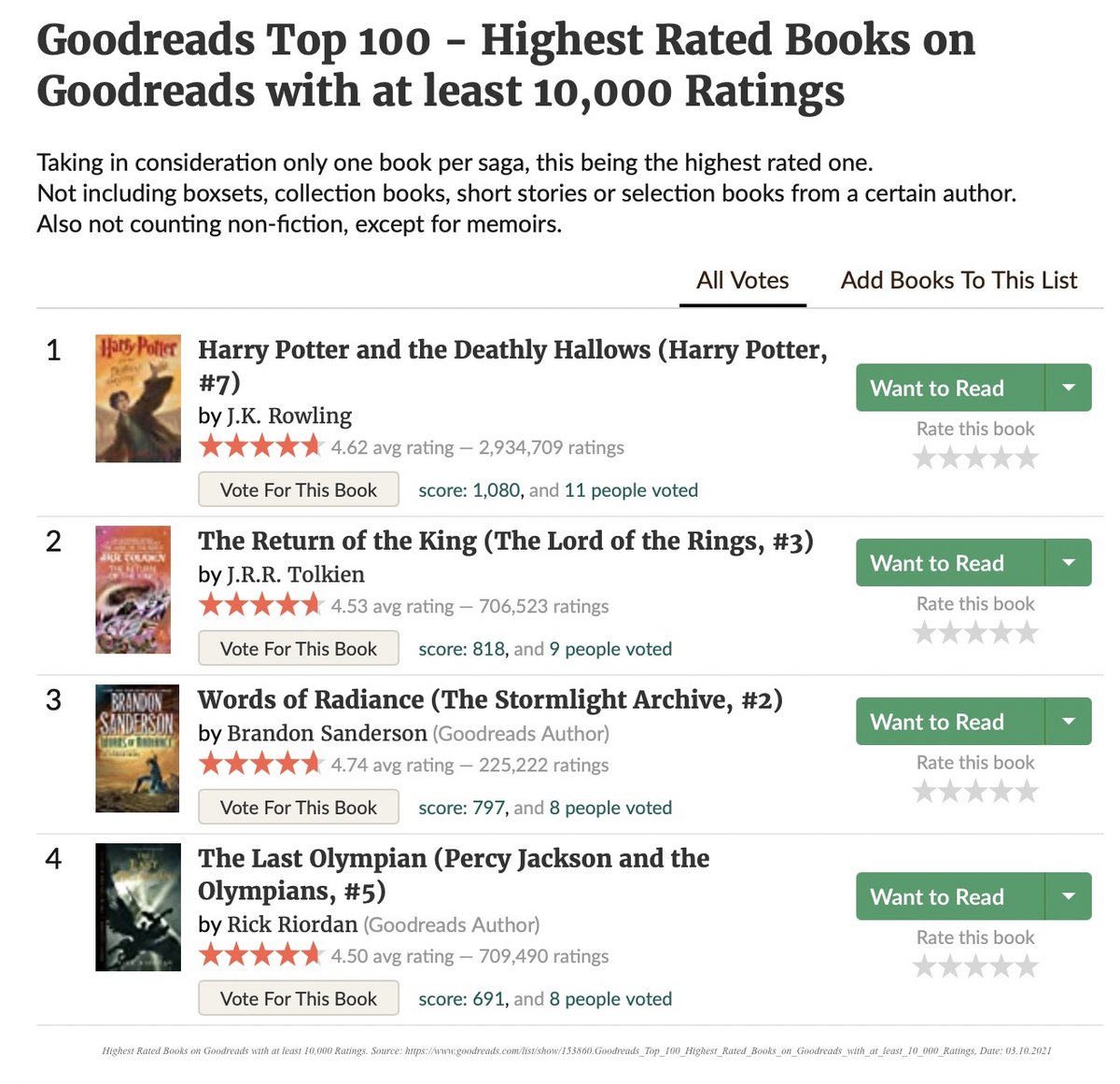

Huge amounts of data are collected on how far people read in books they have purchased online, and on whether it is possible to predict which books they will never purchase. Most of these data are not publicly available, but much else is. Online fora such as Goodreads let readers comment on and rate books, which gives much broader access to the thoughts and impressions of lay readers than ever before.

Some projects have analysed the language of reviews, whereas others have studied how readers retell novels. To understand readership, it is also possible to turn to translation databases, sales figures, and search metrics, each of which allows claims about processes of canonization to be made with much greater force than was possible until recently.

Finally, the study of reading has been taken up by cognitive science, which has found an interesting topic in reading as it can be tracked through brain scans and eye movements.

Applications

Elementary

The digitization of texts has led to a variety of new ways to read and engage with literature, with e-books and e-book readers being a prominent example. Several sites and programs such as the Amazon Kindle gather data that the reader generates simply by reading digitized texts, storing information about, for example, the reader’s average reading speed, whether this speed rises or falls in certain passages, and the length of reading sessions. While all these data are gathered somewhat passively, Amazon Kindle’s highlighting tool, Popular Highlights, allows readers to engage with digital literature in a more active way: readers can highlight passages, and the tool makes visible which passages have been highlighted most frequently. See Lauren Cameron’s “Marginalia and Community in the Age of the Kindle: Popular Highlights in The Adventures of Sherlock Holmes” for a larger discussion of the strengths and weaknesses of Kindle’s Popular Highlights, as well as its relation to other historical interpretive communities.

Indeed, making visible readers' shared but diverging reading experiences is one of the biggest promises of digital text and reading in the 21st century, and another easily accessible tool, Prism, is modelled around it. Prism allows its users to upload texts and create distinct categories; readers are then asked to put every word and passage of the text into these predefined categories. In doing so, they contribute to a visualisation of both the text and the reader responses: every word of the uploaded text is coloured according to the category it has most often been associated with, and a pie chart simultaneously displays what percentage of readers chose a certain category.
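The aggregation described above can be illustrated with a short sketch. It is not Prism's actual code: the data layout (one dictionary per reader, mapping a word position to a category), the function names, and the sample markings are all assumptions made for illustration. Here the percentages are computed per word; Prism may aggregate them differently.

```python
from collections import Counter


def count_markings(tokens, markings):
    """Count, for every token, how often readers assigned each category."""
    per_token = [Counter() for _ in tokens]
    for reader in markings:
        for index, category in reader.items():
            per_token[index][category] += 1
    return per_token


def winning_categories(per_token):
    """Most frequent category per token; None if nobody marked the token."""
    return [c.most_common(1)[0][0] if c else None for c in per_token]


def category_shares(counts):
    """Percentage of markings per category for a single token."""
    total = sum(counts.values())
    return {cat: 100 * n / total for cat, n in counts.items()} if total else {}


# Hypothetical example: three readers mark words of a short text.
tokens = "It was the best of times".split()
markings = [
    {3: "positive", 5: "time"},
    {3: "positive", 5: "time"},
    {3: "time", 5: "time"},
]

per_token = count_markings(tokens, markings)
winners = winning_categories(per_token)   # colour to use for each word
shares = category_shares(per_token[3])    # pie-chart values for "best"

for word, category in zip(tokens, winners):
    print(f"{word:>6}: {category}")
print(shares)
```

When two categories are chosen equally often for a word, this sketch simply keeps the one that was recorded first; a real tool would need an explicit rule for ties.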

Advanced

The abundance of reader-written reviews and comments on social media also expands the possibilities for quantitative analysis of readers. Aggregated, personal opinions can yield insights into a broader reader consensus on how a work is received.

There are various approaches to analysing these data. For instance, Shahsavari et al. (2020) used Goodreads reviews to generate “consensus narrative frameworks” that capture the overarching narratives of books, with the most important actants (i.e. characters) and the relationships between them. These kinds of networks can reveal how a story is perceived and, beyond that, what is remembered from books. Another example compares reviews from Goodreads and Amazon to examine the effect of decoupling book reviewing from book selling (Dimitrov et al., 2015). The findings suggest that the two platforms elicit quite different reader behaviour, depending on the underlying motivation. Amazon reviews appeared to be more extreme in sentiment, and the vocabulary used was more purchase-oriented.
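To give a rough sense of how a network of actants can emerge from many reviews, the sketch below counts how often pairs of character names are mentioned in the same review. This is a deliberately simplified stand-in, not the pipeline of Shahsavari et al. (2020), which extracts relationships rather than mere co-occurrences. The character list and the three reviews are invented for illustration.

```python
import re
from collections import Counter
from itertools import combinations


def cooccurrence_edges(reviews, characters):
    """Count in how many reviews each pair of characters is mentioned together."""
    edges = Counter()
    for review in reviews:
        words = set(re.findall(r"[a-z']+", review.lower()))
        # Sort so that each pair is always stored in the same order.
        present = sorted(name for name in characters if name.lower() in words)
        for pair in combinations(present, 2):
            edges[pair] += 1
    return edges


# Invented sample data (assumption): real input would be scraped reviews.
characters = ["Holmes", "Watson", "Adler"]
reviews = [
    "Holmes and Watson are a perfect pair, but Adler outsmarts them both.",
    "Watson narrates with such warmth that Holmes feels almost human.",
    "Adler deserved a whole novel of her own.",
]

edges = cooccurrence_edges(reviews, characters)
for (a, b), weight in edges.most_common():
    print(f"{a} - {b}: mentioned together in {weight} review(s)")
```

The resulting weighted pairs can be read as the edges of a simple graph: the more reviews mention two characters together, the stronger their link in readers' collective memory of the book.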

Conduct your own sentiment analysis using Goodreads reviews scraped with the Goodreads API. The nltk package for Python provides a sentiment module and sentiment lexicons to implement this. To plot your findings, have a look at the graphs in this sentiment analysis project on GitHub. Alternatively, you can train a machine learning model that predicts genre based on the reviews, following this tutorial.
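The basic idea behind lexicon-based sentiment analysis can be sketched without any external dependencies. The example below assumes the reviews have already been collected as plain strings; the tiny lexicon and the sample reviews are made up for illustration and are not taken from NLTK. In practice, the scoring function could be replaced with NLTK's VADER analyser (`SentimentIntensityAnalyzer` from `nltk.sentiment`, which requires downloading the `vader_lexicon` resource first).

```python
import re
from statistics import mean

# Toy lexicon (assumption): word -> polarity. Real lexicons are far larger.
LEXICON = {
    "loved": 2, "love": 2, "great": 2, "good": 1,
    "bad": -1, "dull": -1, "boring": -2, "terrible": -2,
}


def sentiment_score(text, lexicon=LEXICON):
    """Average polarity of the lexicon words found in the text (0.0 if none)."""
    words = re.findall(r"[a-z']+", text.lower())
    hits = [lexicon[w] for w in words if w in lexicon]
    return mean(hits) if hits else 0.0


def label(score):
    """Map a numeric score to a coarse sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Invented sample reviews (assumption).
reviews = [
    "I loved this book, the ending is great.",
    "Boring and dull, a terrible slog.",
    "It arrived on time.",
]

scores = [sentiment_score(r) for r in reviews]
for review, score in zip(reviews, scores):
    print(f"{label(score):>8}  {score:+.2f}  {review}")
```

A word-counting approach like this ignores negation ("not good") and intensity ("very good"), which is one reason to prefer an established lexicon and analyser for real data.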

Resources

Scripts and sites

- A tutorial on predicting genre from Goodreads reviews.

- A tutorial for webscraping Goodreads reviews and analysing the data using the R programming language.

- A tutorial on exploratory data analysis and sentiment analysis from reviews scraped from Goodreads (using the R programming language).

- A pipeline for sentiment analysis on Goodreads reviews.

- Prism, a tool for crowdsourced interpretation of texts created by the Scholars' Lab.

- NLTK, The Natural Language Toolkit for Python.

Articles

- Antoniak, M., & Walsh, M. (2021, April). The Crowdsourced “Classics” and the Revealing Limits of Goodreads Data. Journal of Cultural Analytics, 1(1). https://hcommons.org/deposits/objects/hc:31898/datastreams/CONTENT/content

- Cameron, L. (2012). Marginalia and Community in the Age of the Kindle: Popular Highlights in “The Adventures of Sherlock Holmes”. Victorian Review, 38(2), 81-99. https://doi.org/10.1353/vcr.2012.0046

- Dimitrov, S., Zamal, F., Piper, A., & Ruths, D. (2015, April). Goodreads versus Amazon: the effect of decoupling book reviewing and book selling. In Proceedings of the International AAAI Conference on Web and Social Media (Vol. 9, No. 1). http://piperlab.mcgill.ca/pdfs/Goodreads_ICWSM_2015.pdf

- Finn, E. (2011, September 15). Becoming Yourself: the Afterlife of Reception. Stanford Literary Lab Pamphlet 3. https://litlab.stanford.edu/LiteraryLabPamphlet3.pdf

- Shahsavari, S., Ebrahimzadeh, E., Shahbazi, B., Falahi, M., Holur, P., Bandari, R., ... & Roychowdhury, V. (2020, July). An automated pipeline for character and relationship extraction from readers' literary book reviews on Goodreads.com. In 12th ACM Conference on Web Science (pp. 277-286). https://doi.org/10.1145/3394231.3397918